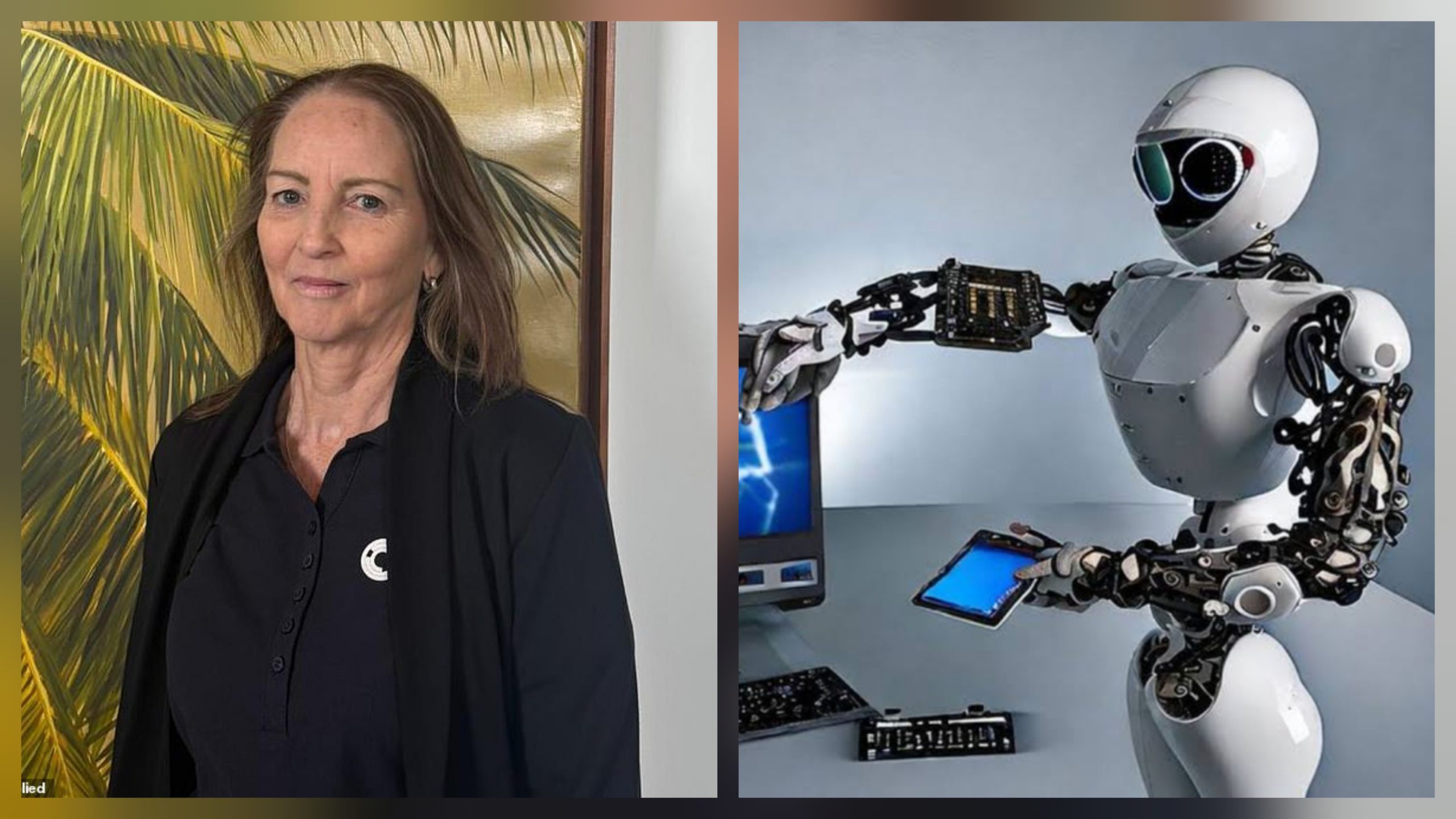

An Australian bank worker has claimed she was made redundant after unknowingly helping to train the artificial intelligence (AI) system that later took over her role.

Kathryn Sullivan, 63, said she was dismissed in July after 25 years of service as a teller with the Commonwealth Bank of Australia (CBA). She explained that part of her final duties involved scripting and testing chatbot responses for the bank’s “Bumblebee AI,” stepping in whenever the bot failed to answer customer queries.

“I was completely shell-shocked, alongside my colleague,” Sullivan said. “We just feel like we were nothing, we were a number. Inadvertently, I was training a chatbot that took my job.”

She added that the bank failed to communicate with her for over a week after her dismissal:

“They ghosted me for eight business days before they answered any of my questions.”

Although Sullivan said she supported technological innovations that improved customer service, she argued that AI should not be allowed to replace human workers without clear regulations.

Following customer backlash and a spike in calls after the redundancies, CBA admitted it had mishandled the process and reversed its decision, offering affected staff their roles back. However, Sullivan declined the offer, saying the new position offered to her was different from her original role and lacked job security.

A bank spokesperson later apologised, acknowledging that the bank's assessment of the 45 affected roles “did not adequately consider all relevant business considerations.”

“We have apologised to the employees concerned and acknowledge we should have been more thorough in our assessment of the roles required,” the spokesperson said. “We are currently supporting affected employees and reviewing our internal processes to improve our approach going forward.”

Sullivan’s case highlights growing tensions between technological advancement and job security, with calls for stronger regulations to ensure AI complements rather than replaces human workers.